Vision Guided Robotic Systems:

Eyeing Automated Solutions

By Eric R. Bessette

Sales Engineer

INTRODUCTION

As the automated robotics field continues to grow and to innovate the ways that products are delivered to the consumers of the world, one must take note of the awe-inspiring revolutions occurring within the field. Collaborative robots have raised safety to a new priority level with designs that include fail-safe stops upon contact. Programming has become remarkably simple, and the pendants containing the robot's graphical user interface (GUI) resemble mass-market tablets more and more every year. Among all of these improvements, the most exciting is the development of in-depth vision systems which can differentiate between many different items and command the robot to organize them to the programmer's bidding.

What are Vision Guided Robotic Systems (VGRS)?

Researchers have long envisioned the potential applications of digital cameras for the inspection of products. The major impediment to a working vision system was that the digital camera is very good at capturing data but lacks the functionality necessary for in-depth analysis of that data. Recent innovations in image analysis algorithms have made it possible to discern objects from one another automatically. Combining this technology with modern safety-first robotic arms creates novel automation opportunities. It has the potential to revolutionize manufacturing processes, improving repeatability, cycle rate, reliability and safety on the plant floor while reducing the costs associated with labor. The anatomy of the vision system is simple and comprises three key areas of interest: lighting, the digital camera, and the tools offered by the controller. Integration into the manufacturing processes of a particular company requires careful consideration of all three.

1. Lighting

Lighting is the most important factor in the functionality of a vision system and is usually the one most overlooked by integrators. One must take into account the desired result when capturing visual data. Is the shape of the workpiece under scrutiny, or are we concerned with surface variations? The answer will aid in deciding whether the workpiece should be front lit, back lit, or lit at a low angle. Next, one must evaluate the backdrop of the shot and its relationship to the workpiece. This includes taking note of the color and surface texture of both. Depending on the intended result of the measurement, colored LEDs may have to be used in order to accentuate the area of interest. One may also be required to adjust the angle of the camera in relation to the light, or to add filters such as a polarizer or a diffuser, in order to correct for glossiness or shine or to improve contrast. Once the lighting has been resolved, integrators must confirm that factory or warehouse conditions will not affect the VGRS after installation.

2. Camera

After the optimal lighting configuration is addressed, camera placement and type are given consideration. Placement is important because of the previously mentioned lighting difficulties and the need for a proper angle to ensure the area of interest is captured. The type of camera (or cameras) is selected by accounting for the functions the application requires. Choosing whether the camera is color, black and white, line scan, high resolution, and/or 3D follows from understanding the root goal of the vision system setup and attempting to provide as much dependability as possible without paying for unneeded features. The workplace must be controlled so that the vision system remains in optimal working condition. Every contributing element in the environment, including lighting, product color changes, and airborne chemicals, must be considered and tested. Later I will highlight some applications of vision guided robotic systems to demonstrate how each type of camera can be used for different situations.

3. Controller Tools

Once the image is captured, it is converted into a digital signal and sent to a controller. This controller offers a host of inspection tools which are displayed on a monitor and navigated with a mouse. This is the part of the process in which the integrator gives meaning and direction to the information. By utilizing the proper pre-programmed tools and settings in response to a chosen stimulus, the integrator is able to coordinate the vision system with a robotic arm in the most remarkable ways. Whether the system is used for quality assurance, palletizing, sorting, reloading or any other function, the steps to program the tasks are straightforward. Controllers can coordinate with up to four different cameras, and each can use different inspection tools that prompt different responses from multiple collaborative robotic arms. The inspection tools range from barcode readers to specification measurements and even include an auto teach function, which learns what a good part is by analyzing thirty or more good parts and building a distribution of acceptance. Workpieces of differing physical appearance can be handled in different ways, even with a one camera, one robotic arm setup. The object's orientation will not affect the camera's ability to recognize it. If consistent orientation of the object is required, vision guided robotic systems can red flag outliers and/or adjust them to a predetermined angle of skew. 3D renderings can be made to confirm consistency of shape and design. With these tools and the continued improvement of safe robotic arms, the potential for VGRS is undeniably compelling.

How Can They Impact Business?

Investment in VGRS can be a big step for a company. Automation has been proliferating through the manufacturing world for some time now, and as new innovations are introduced into the market we find more potential for improving our processes. Collaborative robots paired with smart vision systems are just such an innovation.

4. Improving Productivity

In the past, many jobs that were simple, repetitive and required precision still could not be automated because of the limitations of the technology of the day. The advent of vision guided robotics has created new possibilities for what can be automated, allowing companies to make multiple products in a quicker, safer and more efficient way. Cost savings come from reducing the number of unneeded components, producing more product, decreasing injury risk for employees, and offering a more consistent product to the consumer. Combine these benefits with the fact that VGRS can be deployed in places where robotic automation was previously unthinkable, and it becomes apparent that this will have a powerful effect on manufacturing practices as well as loss prevention.

5. Ensuring Employee Safety

There are two types of risk that an employer must aim to protect workers from: acute injury and chronic injury. An acute injury is one sustained in the workplace during a single event. These injuries can be severe in nature and can create lifelong debilitation for the employee. Chronic injuries are those in which the employee incurs small repeated injuries over many years, culminating in a debilitating injury. Automated robotics can reduce both kinds of risk. Repetitive, dangerous manual tasks in manufacturing or handling can now be carried out by vision guided robotic arms, freeing up employees to grow in other parts of a more productive company. Negative ergonomic impacts can be done away with, lengthening an employee's career and allowing employers to reap the benefits of a more experienced and able-bodied workforce. Collaborative robots have been designed with safety in mind. The days of the caged robot are ending as a new generation of robotic arms comes equipped with new safety protocols and constantly improving algorithms that allow for sophisticated spatial awareness. Many robots are now available with collision detection, allowing them to work alongside other robots without the fear of a major collision. If a collision occurs with a surface, a robot, or an employee, the robotic arm simply stops moving. New laser systems can create a perimeter around the robot, ensuring that as an employee approaches, the robotic arm slows its work speed in relation to the worker's proximity, culminating in a shutdown before the worker enters the robot's immediate workspace.
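The proximity-based slowdown described above can be pictured as a simple mapping from worker distance to a speed multiplier. The following is a minimal sketch only: the function name, the linear ramp, and both radii are illustrative assumptions, not values or logic taken from any particular robot or laser scanner.

```python
def speed_scale(distance_m, stop_radius_m=0.5, slow_radius_m=2.0):
    """Return a speed multiplier between 0.0 and 1.0 for a worker distance.

    Both radii are hypothetical example values chosen for illustration.
    """
    if slow_radius_m <= stop_radius_m:
        raise ValueError("slow_radius_m must be larger than stop_radius_m")
    if distance_m <= stop_radius_m:
        return 0.0  # worker is inside the stop zone: halt the arm
    if distance_m >= slow_radius_m:
        return 1.0  # worker is outside the perimeter: full speed
    # Between the two radii, ramp the speed linearly with distance.
    return (distance_m - stop_radius_m) / (slow_radius_m - stop_radius_m)


if __name__ == "__main__":
    for d in (3.0, 1.25, 0.3):
        print(f"worker at {d} m -> speed multiplier {speed_scale(d)}")
```

A real installation would take these limits from a risk assessment and the robot vendor's safety controller rather than from application code.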

6. Quality Assurance with Traceability

In today's manufacturing world, the importance of tracking and documenting a product throughout its manufacturing process is undeniable. The demands on quality management tend to increase from year to year. A defective product not only affects a company's relationship with a customer, it also hurts the brand and costs money. Unfortunately, it can be difficult to pinpoint where or how a faulty product was created. Machine vision technology now offers a simple solution to such problems by offering traceability. Traceability is the ability to verify the history, location and application of an item. It allows business owners to pinpoint a faulty product and determine its exact route to the customer. When a camera scans a product's barcode or product number, it can also take a video or picture of the product, creating a history of the item for every step of its journey. This information is stored in a database for later reference. When the system is integrated with collaborative robotic arms, bad parts can be scanned, photographed, separated from the good inventory and placed in a separate container, allowing operators to determine whether the product has a fixable deficiency. If it does, the corrected product can continue its journey to the customer with the history of the original fail and the later pass still in the computer records. Traceability is becoming indispensable as a system for management and operation. It allows for an increase in quality, a reduction of overhead and a guarantee of safety.
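The idea of storing each inspection against the product's barcode can be sketched with Python's built-in sqlite3 module. This is an illustration under stated assumptions: the table layout, column names, station name and image paths are all hypothetical and do not describe any vendor's database.

```python
import sqlite3


def open_history(path=":memory:"):
    """Open (or create) a small inspection-history database."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS inspections ("
        " id INTEGER PRIMARY KEY AUTOINCREMENT,"
        " barcode TEXT NOT NULL,"
        " station TEXT NOT NULL,"
        " passed INTEGER NOT NULL,"
        " image_path TEXT)"
    )
    return conn


def record_inspection(conn, barcode, station, passed, image_path=None):
    """Store one pass/fail result and the picture taken at that step."""
    conn.execute(
        "INSERT INTO inspections (barcode, station, passed, image_path)"
        " VALUES (?, ?, ?, ?)",
        (barcode, station, int(passed), image_path),
    )
    conn.commit()


def history(conn, barcode):
    """Return every recorded step for a barcode, oldest first."""
    rows = conn.execute(
        "SELECT station, passed, image_path FROM inspections"
        " WHERE barcode = ? ORDER BY id",
        (barcode,),
    ).fetchall()
    return [(station, bool(passed), image) for station, passed, image in rows]


if __name__ == "__main__":
    conn = open_history()
    # A part fails, is corrected, and passes on the second inspection.
    record_inspection(conn, "0001", "label_check", False, "img/0001_a.png")
    record_inspection(conn, "0001", "label_check", True, "img/0001_b.png")
    for step in history(conn, "0001"):
        print(step)
```

Because rows are only ever added, the original fail stays on record next to the later pass, which matches the rework scenario described above.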

Application Example

These recent improvements in robotic arm technology open up many applications that previously would have been considered unimaginable. They are coupled with a new emphasis on ease of use. Robot manufacturers have added operator-friendly GUIs for the robot and the controller. Ethernet connections allow robots to communicate quickly with other robots and with any other device on the factory network. This section discusses a handful of the many current applications for this technology.

Safely Palletizing Products with Humans Working Closely

In the past, robotic arms posed a threat to worker safety, prompting fencing to be constructed around the robot in an attempt to keep humans out of its workspace. With new collaborative robots this is not an issue, as their protocols allow the robot to cease action when it senses human contact.

This allows humans and robots to work side by side in many different applications. For example, the vision system can be set to recognize products on a conveyor belt, and the robot can lift them and place them in packaging. Once the package is full, the robot can activate a switch that moves the pallet down the line and sets a new pallet in place. The robot then starts the process over. A few feet away, a worker can be collecting or restocking pallets in complete safety. This is known as a pick and place application. It requires only a modest image resolution, depending on the size of the object, and can be done with a black and white camera because the object's position is the only information of importance.

Sorting Multiple Items with Multiple Orientations

One vision guided robotic system can differentiate between multiple items in multiple orientations allowing for advanced sorting techniques previously unavailable in the market. VGRS can also provide flexibility that is essential when dealing with unknown part sizes or different parts entirely that may be produced or sorted on the same production line.

A manufacturing warehouse could have multiple CNC machines producing different parts. These parts require a deburring cycle. Normally, parts go through this cycle in groups of like parts, or an employee is forced to sort the pieces after deburring. With VGRS, all pieces produced during manufacturing can be deburred together and placed in a robot work zone where the finished pieces are sorted. This allows for a much quicker machining process while freeing up employees to tend to more important tasks. In this example a black and white camera can be used. The needed resolution depends on the size of the parts and the similarities between different parts. The more similar two parts are, the higher the camera resolution needed to differentiate them.
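The link between part similarity and camera resolution can be made concrete with a rough estimate. The sketch below assumes a common rule of thumb, namely that the smallest feature distinguishing two parts should span a few pixels; the function name and the default of three pixels per feature are illustrative assumptions, not figures from a camera vendor.

```python
import math


def min_pixels(field_of_view_mm, smallest_feature_mm, pixels_per_feature=3):
    """Estimate the pixel count needed along one axis of the image.

    pixels_per_feature is an assumed rule-of-thumb value.
    """
    if smallest_feature_mm <= 0:
        raise ValueError("smallest_feature_mm must be positive")
    return math.ceil(field_of_view_mm / smallest_feature_mm * pixels_per_feature)


if __name__ == "__main__":
    # Parts that differ by a 1 mm feature across a 300 mm wide work zone.
    print(min_pixels(300, 1.0))
    # Nearly identical parts that differ only by a 0.25 mm feature.
    print(min_pixels(300, 0.25))
```

Under these assumptions, shrinking the distinguishing feature by a factor of four raises the required pixel count along that axis by the same factor, which is why similar parts push the choice toward a higher-resolution camera.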

Quality Control using 3D Imaging

Products can now be imaged in three dimensions and displayed on a monitor. Using the auto teach function, any item whose discrepancies from a good part fall outside the user-programmed tolerance level is red flagged, at which point the robotic arm removes the part from the assembly line. This allows for unprecedented quality control and in-depth inspection of molding, welding, soldering, microprocessor boards, credit cards, tires and much more.

Quality Control using LumiTrax

LumiTrax is a cutting-edge vision system produced by Keyence. In this setup, a high-speed camera is used in concert with intelligent lighting and an advanced inspection algorithm. The capturing method analyzes multiple images acquired with lighting from different directions to create shape (surface irregularities) and texture (pattern) images. This allows for inspections that disregard the surface design and search for stains, flaws or numbers on the surface of the product, and it can also be used to remove glare and specular reflection.

Quality Control using AutoTeach

The AutoTeach tool allows the controller to learn the image of a good product. After calibration with a minimum of 30 good products, the software can determine how much a product varies from the original good products. Certain variations can be omitted if preferred.

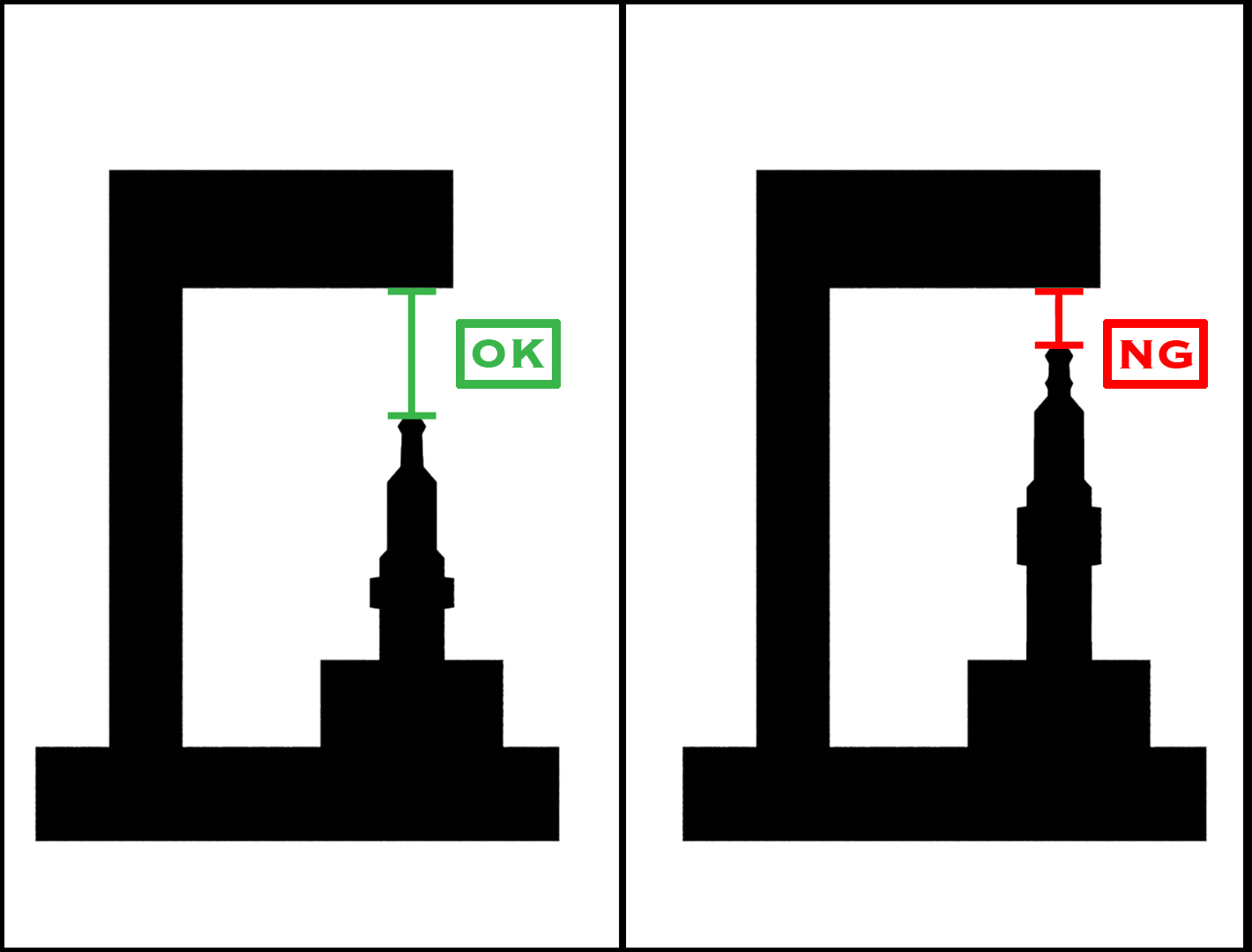
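To illustrate the idea of learning an acceptance range from good parts, here is a minimal sketch. It is not the AutoTeach algorithm itself, which is not described here: it assumes each part is reduced to a single numeric measurement and that parts within k standard deviations of the good-part mean are accepted. The function names and the default of k = 3 are hypothetical.

```python
from statistics import mean, stdev


def teach(good_measurements, k=3.0, min_samples=30):
    """Build an acceptance band (low, high) from measurements of good parts."""
    if len(good_measurements) < min_samples:
        raise ValueError(f"need at least {min_samples} good parts")
    center = mean(good_measurements)
    spread = stdev(good_measurements)
    return (center - k * spread, center + k * spread)


def is_acceptable(value, band):
    """Return True when a new measurement falls inside the taught band."""
    low, high = band
    return low <= value <= high


if __name__ == "__main__":
    # Thirty hypothetical good parts measuring close to 10.0 mm.
    good = [10.0 + 0.01 * (i % 5 - 2) for i in range(30)]
    band = teach(good)
    print(is_acceptable(10.02, band))  # within the spread of the good parts
    print(is_acceptable(10.20, band))  # far outside it
```

Widening or narrowing k plays the role of the user-set tolerance: a larger k accepts more variation, a smaller k rejects more parts.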

Quality Control using Backlit Measurement

In particular cases, a product must contain a gap or opening of an accurate size. In the past, workers were required to measure these areas on each product produced, which would bottleneck the entire manufacturing process. Now, using a measurement tool coupled with backlighting, this can be accomplished very easily.

For example, the production of spark plugs is an almost entirely mechanized process. At the end of this process the spark plugs must go through quality control to ensure that the gap height between the anode and cathode is up to standard. Currently this job is done manually by workers, which slows down an otherwise very uniform process. By backlighting the spark plug, using a powerful 21 megapixel camera and applying the measurement confirmation tool, this part of production can be mechanized, removing the bottleneck.
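A backlit part appears as a dark silhouette against a bright background, so a gap shows up as a run of bright pixels between two dark regions. The sketch below measures that run along a single line of pixels. It is a simplified illustration: the function name, the fixed threshold of 128, and the millimeters-per-pixel calibration value are all assumptions, and a real measurement tool would work on the full image with sub-pixel edge detection.

```python
def gap_height_mm(column, mm_per_pixel, threshold=128):
    """Measure the bright gap between two dark regions along one pixel line.

    column is a list of grayscale values (0 to 255) sampled across the gap.
    Returns the gap size in millimeters, or None if no enclosed gap is found.
    """
    bright = [value >= threshold for value in column]
    try:
        first_dark = bright.index(False)            # start of first electrode
        gap_start = bright.index(True, first_dark)  # first bright pixel after it
        gap_end = bright.index(False, gap_start)    # start of second electrode
    except ValueError:
        return None
    return (gap_end - gap_start) * mm_per_pixel


if __name__ == "__main__":
    # Synthetic line: background, electrode, 44-pixel gap, electrode, background.
    line = [255] * 5 + [10] * 20 + [250] * 44 + [12] * 30 + [255] * 5
    gap = gap_height_mm(line, mm_per_pixel=0.025)
    print(f"measured gap: {gap:.3f} mm")
```

The measured value can then be compared against the specification limits to give the pass or fail decision for each spark plug.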

Traceability

The ability to trace a product's journey to the customer will prove extremely valuable to small and large companies alike. It will offer the ability to zoom in on trouble areas in the production process as well as offer insight into parts of the process that can be refined and sped up.

One application could be inspecting yogurt containers on a conveyor belt. As one camera reads the barcode, a second camera verifies the quality of the label and packaging. This inspection yields a pass or fail rating based on the manufacturer's range of acceptability for their products. The information is stored on the computer and can be referenced by the unit's barcode. The camera reading the barcode can be a line scan camera, but the camera confirming the quality of the product must be a good-quality color camera.

Summary

A new generation of accurate vision systems is now available at cost-effective rates. These vision systems come equipped with user-friendly software and an intuitive GUI to allow flexibility and simple reprogrammability. Now that the cost of implementing VGRS has dropped to a more attainable level, during a period that has seen the versatility of the tool grow, it is positioned as a true value for manufacturers and end users alike.